When you deploy Ruckus Networks access points with the SmartZone architecture, the APs run in bridge mode by default: all client traffic is bridged onto the wired network in a VLAN. In some situations, however, you may want all traffic to be tunneled so you can use additional control functions such as QoS for voice traffic, PCI compliance or centralized breakout.

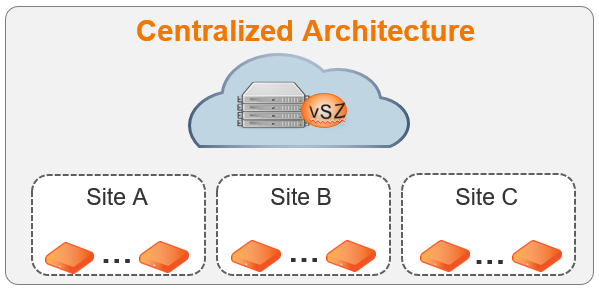

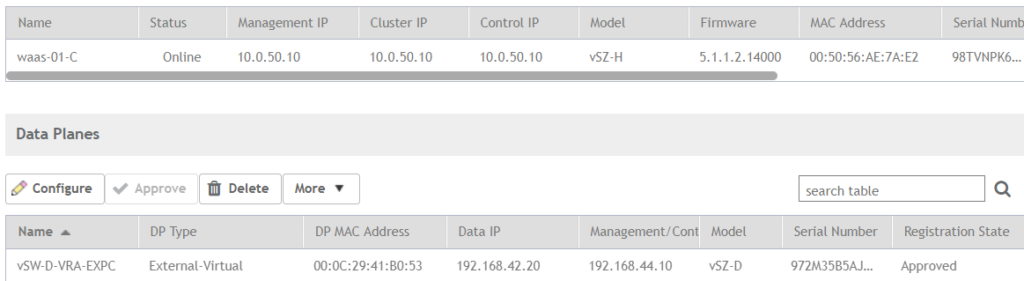

In our case we have a virtual SmartZone running in High-Scale mode (vSZ-H) on our VMware server, isolated in a DMZ. With this setup, separate sites can manage their own wireless networks. This SmartZone appliance can also run on Microsoft Azure or Amazon Web Services to deliver wireless network management with the benefits of cloud management.

Between our server and the remote sites we have no IP VPN or MPLS connectivity, so we are effectively simulating our own private cloud model. If one of these sites plans to implement Voice over WLAN, we can configure the SSID as bridged and apply QoS on the switches for every AP uplink. But imagine the wired hardware doesn't fully support QoS, or you can't manage the switches: then you want to tunnel the traffic to a central location where you are able to configure QoS. In that case we add a data plane to the environment of this specific site. The data plane can be a hardware appliance or a virtual appliance; for demo purposes we use a virtual appliance.

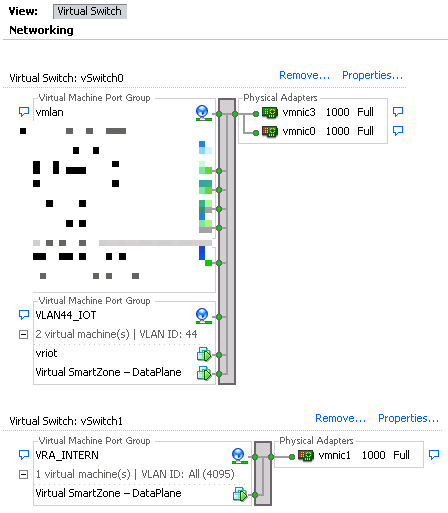

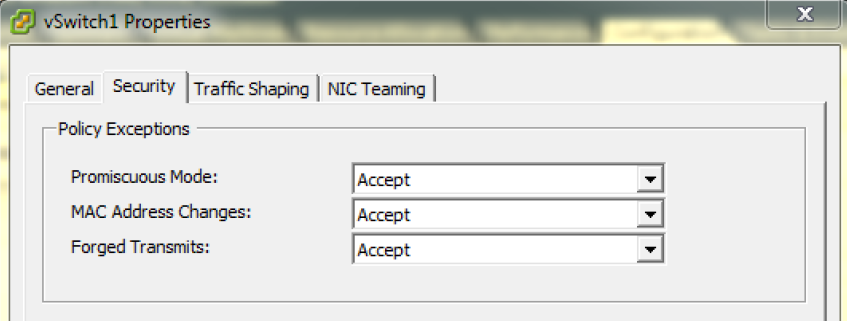

A virtual data plane has two interfaces by default: one carries the data IP, the other is the management and control interface. The data IP is the address the APs use as the endpoint of their GRE tunnels. The vSwitch for this interface must be tagged with all VLAN IDs so that every VLAN can be tunneled through the data plane if required. If you don't tag this interface with 'All VLAN ID' and you change the SSID from bridged to tunneled, the SSID will no longer be broadcast. I got this wrong the first time: I tagged the data interface only with the VLAN ID the APs were using, and it took me a long time to find my mistake. The vSwitch for the data plane interface must also have Promiscuous mode enabled to allow tunneling.

The management and control IP is used to connect to the SmartZone High-Scale appliance on our VMware host. This interface is tagged only with the specific VLAN ID from which the data plane should communicate with the SmartZone controller.
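To make the two interface requirements concrete, here is a minimal sketch that checks a simplified description of the two vSZ-D port groups. The `PortGroup` class and `check_vszd_port_groups` function are hypothetical (this is not pyVmomi or any vSphere API), and the VLAN IDs 10 and 20 are made-up examples. It assumes a VMware standard vSwitch, where the 'All' option corresponds to VLAN ID 4095.

```python
from dataclasses import dataclass

# Hypothetical, simplified model of a VMware port group. This is NOT a
# vSphere/pyVmomi object; it only holds the two settings discussed above.
@dataclass
class PortGroup:
    name: str
    vlan_id: int       # standard vSwitch: 0 = untagged, 1-4094 = one VLAN, 4095 = All
    promiscuous: bool  # Security > Promiscuous mode

ALL_VLANS = 4095

def check_vszd_port_groups(data_pg: PortGroup, control_pg: PortGroup) -> list:
    """Return human-readable problems found in the vSZ-D port group settings."""
    problems = []
    # Data interface: must carry every VLAN an SSID might be tunneled into.
    if data_pg.vlan_id != ALL_VLANS:
        problems.append(
            f"{data_pg.name}: VLAN ID {data_pg.vlan_id} instead of All (4095); "
            "tunneled SSIDs may stop broadcasting"
        )
    # Data interface: Promiscuous mode must be enabled for tunneling.
    if not data_pg.promiscuous:
        problems.append(f"{data_pg.name}: Promiscuous mode is disabled")
    # Management/control interface: one specific VLAN towards SmartZone.
    if not 1 <= control_pg.vlan_id <= 4094:
        problems.append(f"{control_pg.name}: expected a single VLAN ID towards SmartZone")
    return problems

if __name__ == "__main__":
    # The mistake described above: data interface tagged with the AP VLAN only.
    wrong = PortGroup("vSZ-D data", vlan_id=10, promiscuous=False)
    right = PortGroup("vSZ-D data", vlan_id=ALL_VLANS, promiscuous=True)
    control = PortGroup("vSZ-D control", vlan_id=20, promiscuous=False)
    print(check_vszd_port_groups(wrong, control))
    print(check_vszd_port_groups(right, control))  # -> []
```

The check treats the two interfaces differently on purpose: the data interface is a trunk for every possible VLAN, while the control interface only needs the single VLAN that reaches the controller.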

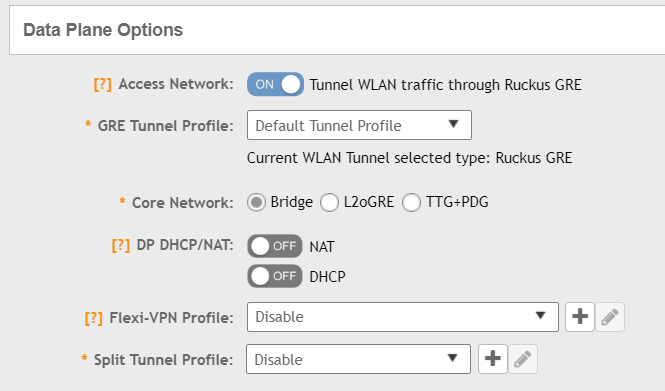

In the SSID configuration we have to enable the Data Plane options to tunnel WLAN traffic; by default, the "Default Tunnel Profile" is selected. The GRE tunnel profile decrypts the 802.11 packets and can encrypt them again towards the Data Plane over the wired network.

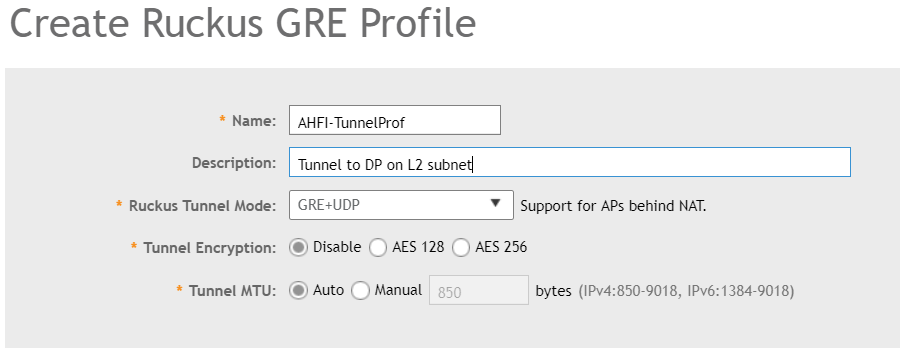

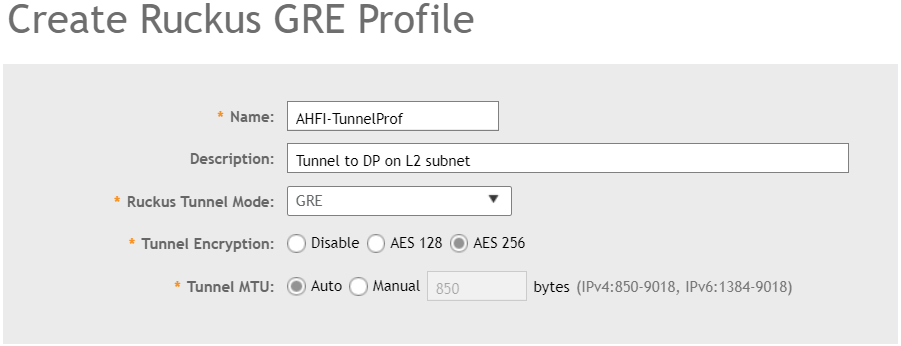

In the Default Tunnel Profile, tunnel encryption is disabled: only management traffic is encrypted and all other data is sent unencrypted to the Data Plane. For a higher security standard we also want to encrypt the data traffic; if the APs support the stronger options, you can enable AES128 or AES256 tunnel encryption. The right Ruckus Tunnel Mode depends on the network design. In my case the APs and the Data Plane are on the same subnet, so I select GRE. Remember that the data plane has both a data IP and a management/control IP; the tunnel terminates on the data IP. If my design were different and the Data Plane were located on my server, I would have to select GRE+UDP to support NAT.
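The two choices in the tunnel profile can be written down as a small decision sketch. The function names and enums below are hypothetical helpers, not a Ruckus API. It follows the rule from the paragraph above (same subnet means GRE, NAT in the path means GRE+UDP) and assumes that a routed path without NAT can still use plain GRE. Preferring AES256 over AES128 when both are supported is our own choice, not a Ruckus recommendation. The IP addresses are made-up examples.

```python
import ipaddress
from enum import Enum

class TunnelMode(Enum):
    GRE = "GRE"
    GRE_UDP = "GRE+UDP"

class TunnelEncryption(Enum):
    DISABLED = "Disabled"
    AES128 = "AES128"
    AES256 = "AES256"

def choose_tunnel_mode(ap_ip: str, data_ip: str, prefix_len: int, nat_in_path: bool) -> TunnelMode:
    """GRE when the AP and the vSZ-D data IP share a subnet (no NAT possible);
    GRE+UDP when NAT sits between them. Assumption: plain GRE otherwise."""
    ap_net = ipaddress.ip_interface(f"{ap_ip}/{prefix_len}").network
    if ipaddress.ip_address(data_ip) in ap_net:
        return TunnelMode.GRE
    return TunnelMode.GRE_UDP if nat_in_path else TunnelMode.GRE

def choose_encryption(ap_supported: set) -> TunnelEncryption:
    """Pick the strongest tunnel encryption the APs in the zone support."""
    for option in (TunnelEncryption.AES256, TunnelEncryption.AES128):
        if option in ap_supported:
            return option
    # Same behaviour as the Default Tunnel Profile: data sent unencrypted.
    return TunnelEncryption.DISABLED

if __name__ == "__main__":
    # APs and data IP on the same /24, as in this post.
    print(choose_tunnel_mode("10.0.0.20", "10.0.0.5", 24, nat_in_path=False).value)  # GRE
    # Data plane behind NAT on a central server.
    print(choose_tunnel_mode("10.0.0.20", "198.51.100.10", 24, nat_in_path=True).value)  # GRE+UDP
    print(choose_encryption({TunnelEncryption.AES128}).value)  # AES128
```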

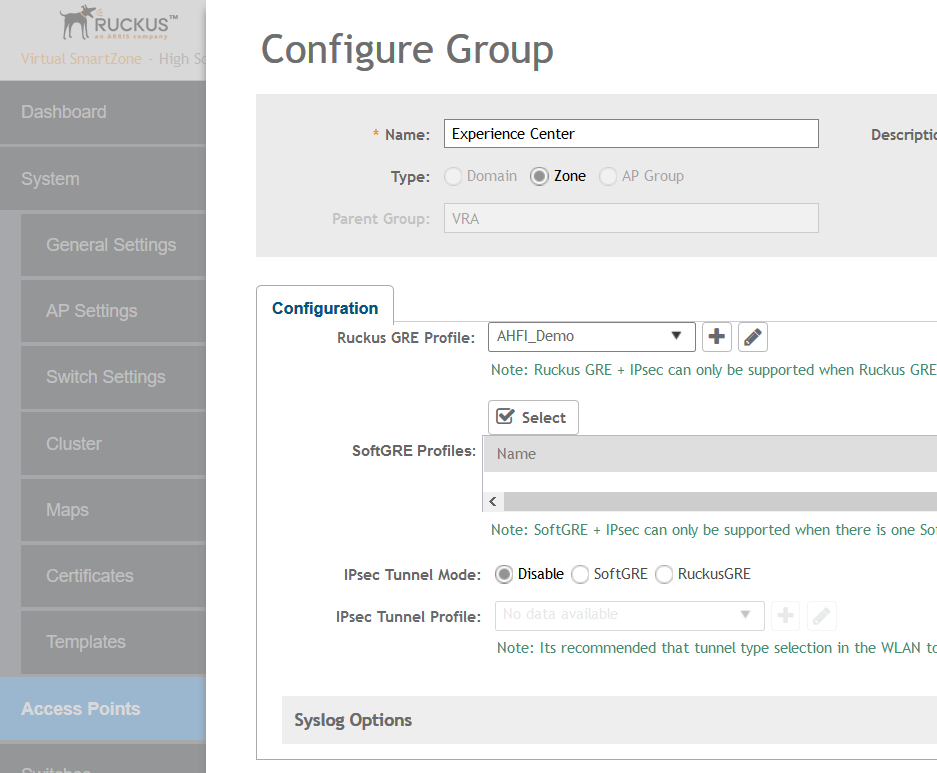

After creating this custom GRE profile, we need to apply it to the Zone where we want to use it. At first I looked in the SSID settings for the newly created GRE profile, but because the tunnel runs from the AP to the Data Plane, it is configured at AP Zone level, not at SSID level.

If you now open the SSID, you will see that the Data Plane options show our AHFI_DEMO profile. We have now enabled Data Plane tunneling for building A, while buildings B and C still use bridged network access.
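The split between zone level and SSID level can be pictured with a small conceptual model: the zone decides which tunnel profile is used, and the SSID only decides whether its traffic is tunneled at all. The classes below are hypothetical and mirror the names in this post; they are not SmartZone's actual data model, and the SSID name "Voice" is a made-up example.

```python
from dataclasses import dataclass

# Conceptual model only: names mirror this post, not SmartZone's data model.
@dataclass
class Zone:
    name: str
    tunnel_profile: str = "Default Tunnel Profile"  # chosen at AP Zone level

@dataclass
class Wlan:
    ssid: str
    tunnel_enabled: bool = False  # the SSID only switches tunneling on or off

def forwarding(zone: Zone, wlan: Wlan) -> str:
    """Describe how a WLAN in a given zone forwards client traffic."""
    if not wlan.tunnel_enabled:
        return "bridged"
    # The profile comes from the zone, never from the SSID itself.
    return f"tunneled ({zone.tunnel_profile})"

if __name__ == "__main__":
    sites = [
        (Zone("Building A", "AHFI_DEMO"), Wlan("Voice", tunnel_enabled=True)),
        (Zone("Building B"), Wlan("Voice")),
        (Zone("Building C"), Wlan("Voice")),
    ]
    for zone, wlan in sites:
        print(f"{zone.name}: {forwarding(zone, wlan)}")
    # Building A: tunneled (AHFI_DEMO)
    # Building B: bridged
    # Building C: bridged
```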

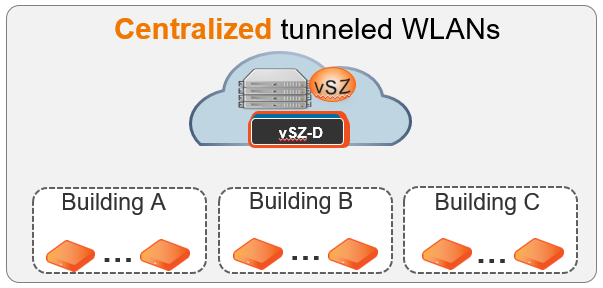

If you move the vSZ-D to the datacenter and enable the GRE profile for the AP zones of buildings A, B and C, you can centralize tunneling for the whole group.

Hopefully this helps you better understand the flexibility and possibilities of the Data Plane options in Ruckus Networks.

This is really awesome, thank you for writing it. It is definitely more complex than Cisco. I was wondering if you could answer a few questions:

1- Do I absolutely need the Data Plane vSZ-D? In my vSZ-H I do see an option to create an SSID and tunnel it. Can I not use only the vSZ-H and set up the tunnel?

2- If I understand this correctly, I have only a single interface and I do not see any specific VLANs tagged. Do we HAVE to tag the VLANs for this to work? I am running into the same issue: the SSID is no longer broadcast as soon as I tunnel the traffic.

Thank you

Do I need the Data Plane vSZ-D? If you use a virtual SmartZone, you do. It doesn't matter whether you use vSZ-E or vSZ-H; you need a vSZ-D for tunneling. With a hardware SmartZone appliance you can tunnel traffic through the ports on the appliance.

For me, the reason the SSID was not being broadcast was Promiscuous mode on VMware. You must enable it in the vSwitch configuration (at least, that is what I needed with VMware). You must also tag all possible VLANs on the vSZ-D interface (the 'All' option, VLAN ID 4095, on a VMware standard vSwitch). The vSZ-D has a management interface and a tunneling interface; on the tunneling interface all VLANs should be tagged, including the VLAN used by the SSID.

I have enabled Promiscuous Mode in the vSwitch settings, under Security.

I also see "Data-Interface", which is where I enabled all VLANs. Do I need to enable Promiscuous Mode there as well? I seem to be stuck here:

GRE over UDP: AP/SCG-D UDP port # 23233/23233

Keep Alive Interval/Retry-limit: 10/6

Keep Alive Interval2: N/A

Keep Alive Count: N/A

Force Primary Interval: N/A

------- Run Time Status (Debug) -------

Current tunnel ID: N/A

Current failover mode: 0

Current connected SCG-D: N/A

Current Session UpTime: N/A

Current Keep Alive retry count: N/A

Number of tunnel (re)establishment: 0

FIPS mode: Disable

Reason on last re-establishment: can’t connect to SCG-D

Suggested action: check connectivity between AP and SCG-D

Ipsec state : IPSEC_BEGIN

Ping default gateway from last disconnection:

Tue Mar 31 16:57:06 UTC 2020

PING 10.0.0.1 (10.0.0.1): 56 data bytes

64 bytes from 10.0.0.1: seq=0 ttl=64 time=0.843 ms

64 bytes from 10.0.0.1: seq=1 ttl=64 time=0.422 ms

--- 10.0.0.1 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.422/0.632/0.843 ms

PING 10.101.32.11 (10.101.32.11): 56 data bytes

--- 10.101.32.11 ping statistics ---

2 packets transmitted, 0 packets received, 100% packet loss
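When comparing several APs, a small parser for this kind of status dump can help. The sketch below extracts the re-establishment reason, the GRE-over-UDP ports and the per-host ping results. The function names are hypothetical, and the regular expressions are written only against the output format pasted above, so other firmware versions may print it differently. We assume 10.101.32.11 is the vSZ-D data IP; the dump itself does not say so.

```python
import re

def parse_tunnel_status(text: str) -> dict:
    """Extract the failure reason, GRE-over-UDP ports and ping results
    from an AP tunnel status dump in the format pasted above."""
    summary = {"reason": None, "udp_ports": None, "ping": {}}

    reason = re.search(r"Reason on last re-establishment:\s*(.+)", text)
    if reason:
        summary["reason"] = reason.group(1).strip()

    # e.g. "GRE over UDP: AP/SCG-D UDP port # 23233/23233"
    ports = re.search(r"UDP port #\s*(\d+)/(\d+)", text)
    if ports:
        summary["udp_ports"] = (int(ports.group(1)), int(ports.group(2)))

    # One entry per "<ip> ping statistics" block: host -> (sent, received).
    # \W+ skips the surrounding dashes and blank lines.
    for host, sent, received in re.findall(
        r"(\d{1,3}(?:\.\d{1,3}){3}) ping statistics\W+"
        r"(\d+) packets transmitted, (\d+) packets received",
        text,
    ):
        summary["ping"][host] = (int(sent), int(received))
    return summary

def unreachable_hosts(summary: dict) -> list:
    """Hosts that answered none of the pings, in the order they appear."""
    return [host for host, (_, received) in summary["ping"].items() if received == 0]
```

Applied to the output above, this should report that the default gateway 10.0.0.1 answers while 10.101.32.11 does not, which matches the "check connectivity between AP and SCG-D" suggestion: the problem lies on the path between the AP and the data plane rather than on the AP's local uplink.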

Great blog post on SmartZone Data Plane